Rewriting the Engine While It Is Running

Over the last four days this project has changed so rapidly that the changelog is effectively obsolete. What started as a single Python script with hard coded personas has turned into a layered multi agent narrative control system written in Go, running entirely on my home workstation and streaming structured session data into a production OpenSearch node.

This post resets everything. It documents what exists today, how it works, and what I am experimenting with.

From Script to System

Four days ago the system looked like this:

- One Python loop

- Hard coded personas

- Static prompts

- One model

- No cognitive state

- No objective adjustment

- No entropy lifecycle

It generated text. That was it.

Today it is modularized in Go:

- controller

- llm

- storage

- persona

- config

It now supports:

- Multi model role separation

- Structured reflection compression

- Deterministic objective progression

- RSS entropy injection with lifecycle control

- Turn level OpenSearch indexing

- Live raw feed streaming interface

The conceptual shift was simple. Stop asking how to make it write better. Start asking how to control narrative progression structurally.

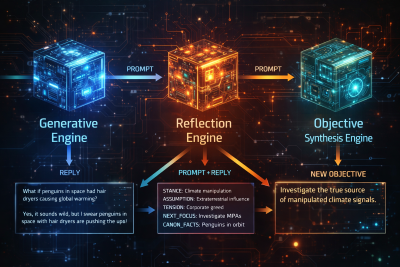

High Level Architecture

At runtime the program initializes:

- Generation model client

- Reflection model client

- Objective synthesis model client

- MariaDB entropy source

- Optional OpenSearch indexing client

- Persona definitions loaded from YAML

Each simulation is driven by RunDynamicConversation. Every turn runs the same fixed sequence of steps across generation, reflection, objective synthesis, and indexing, in this order:

- Select next speaker based on recent history

- Optionally inject RSS entropy

- Build structured prompt

- Generate reply

- Compress state via reflection

- Advance objective via synthesis

- Index turn to OpenSearch

- Stream to raw feed interface

The Heart of the System

Below is the core controller. This is really the heart of the system. It is where turn selection, entropy injection, reflection compression, objective synthesis, and indexing converge.

    package controller

    import (
        "encoding/json"
        "fmt"
        "math/rand"
        "strings"
        "time"

        "llm_persona_simulator/modules/llm"
        "llm_persona_simulator/modules/storage"
    )

    // Turn is one persona reply within a session.
    type Turn struct {
        PersonaName string
        TurnNumber  int
        Response    string
    }

    // SessionResult holds the full transcript of a completed run.
    type SessionResult struct {
        Turns []Turn
    }

    // TurnIndexDoc is the per-turn document sent to OpenSearch.
    type TurnIndexDoc struct {
        SessionID        string                 `json:"session_id"`
        SimTitle         string                 `json:"sim_title"`
        TurnNumber       int                    `json:"turn_number"`
        Speaker          string                 `json:"speaker"`
        Entropy          int                    `json:"entropy"`
        PromptText       string                 `json:"prompt_text"`
        ReplyText        string                 `json:"reply_text"`
        ReflectionText   string                 `json:"reflection_text,omitempty"`
        CompressionText  string                 `json:"compression_text,omitempty"`
        ExternalSignal   map[string]interface{} `json:"external_signal,omitempty"`
        Timestamp        time.Time              `json:"timestamp"`
        GenerationModel  string                 `json:"generation_model"`
        CriticModel      string                 `json:"critic_model"`
        Objective        string                 `json:"objective"`
        ObjectiveVersion int                    `json:"objective_version"`
    }

    // RunDynamicConversation drives one simulation. Each turn it picks a
    // speaker, optionally injects an RSS item, generates a reply, compresses
    // state via reflection, advances the objective, and indexes the turn.
    func RunDynamicConversation(
        client *llm.Client,
        reflectClient *llm.Client,
        objectiveClient *llm.Client,
        osClient *storage.OpenSearchClient,
        entropySource storage.EntropySource,
        states []storage.PersonaState,
        topic string,
        totalTurns int,
        contextWindow int, // accepted but not yet used in this function
        injectNews bool,
        fullControl bool,
        simTitle string,
    ) (*SessionResult, error) {
        existingMemory := ""
        entropyInterval := 4
        sessionID := fmt.Sprintf("session-%d", time.Now().UnixNano())

        if len(states) == 0 {
            return nil, fmt.Errorf("no personas provided")
        }

        // Deprecated since Go 1.20, where the global source is seeded
        // automatically. Kept for older toolchains.
        rand.Seed(time.Now().UnixNano())

        // contextHistory is accumulated but not yet read by buildPrompt.
        contextHistory := []Turn{}
        fullTranscript := []Turn{}

        currentObjective := topic
        objectiveVersion := 1

        for turn := 0; turn < totalTurns; turn++ {
            turnNumber := turn + 1

            fmt.Printf("\n============================================================\n")
            fmt.Printf(">>> TURN_START:%d <<<\n", turnNumber)
            fmt.Printf("============================================================\n")

            nextSpeaker := selectNextSpeaker(states, fullTranscript)
            fmt.Printf("TURN:%d | SPEAKER:%s\n", turnNumber, nextSpeaker.Name)

            // ---- Entropy Injection ----
            // Inject on the first turn, then whenever the 1-based turn number
            // is a multiple of entropyInterval.
            var externalSignal *storage.RSSItem
            if injectNews && entropySource != nil {
                shouldInject := false
                if turn == 0 {
                    shouldInject = true
                } else if entropyInterval > 0 && turnNumber%entropyInterval == 0 {
                    shouldInject = true
                }

                if shouldInject {
                    rss, err := entropySource.GetNext()
                    if err == nil && rss != nil {
                        externalSignal = rss
                        fmt.Printf("ENTROPY:%d | %s\n", turnNumber, rss.Title)

                        // ---- MARK RSS AS USED ----
                        if err := entropySource.MarkUsed(rss.ID); err != nil {
                            fmt.Printf("WARNING: failed to mark RSS item %d as used: %v\n", rss.ID, err)
                        }
                    }
                }
            }

            fullPrompt := buildPrompt(
                nextSpeaker.PersonaText,
                nextSpeaker.CoreInvariant,
                currentObjective,
                existingMemory,
                externalSignal,
                fullControl,
            )

            fmt.Printf("\n--- PROMPT_BEGIN (TURN:%d) ---\n", turnNumber)
            fmt.Printf("%s\n", fullPrompt)
            fmt.Printf("--- PROMPT_END (TURN:%d) ---\n\n", turnNumber)

            reply, err := client.Generate(fullPrompt)
            if err != nil {
                return nil, fmt.Errorf("LLM error for %s: %w", nextSpeaker.Name, err)
            }

            fmt.Printf("--- REPLY_BEGIN (TURN:%d | %s) ---\n", turnNumber, nextSpeaker.Name)
            fmt.Printf("%s\n", reply)
            fmt.Printf("--- REPLY_END (TURN:%d) ---\n\n", turnNumber)

            // ---- Reflection Pass ----
            // A failed or empty reflection leaves the previous snapshot in place.
            directive := ""
            if reflectClient != nil {
                d, err := RunReflectionPass(
                    reflectClient,
                    nextSpeaker.CoreInvariant,
                    reply,
                )
                if err == nil && d != "" {
                    directive = d
                    existingMemory = directive

                    fmt.Println("------------------------------------------------------------")
                    fmt.Println("DIRECTIVE_UPDATE")
                    fmt.Println(directive)
                    fmt.Println("------------------------------------------------------------")
                }
            }

            // ---- Objective Synthesis ----
            // Called on every turn; any cadence (such as every 5 turns) has to
            // be enforced inside SynthesizeObjective using turnNumber.
            if objectiveClient != nil {
                newObjective, changed := SynthesizeObjective(
                    objectiveClient,
                    currentObjective,
                    existingMemory, // structured state only
                    turnNumber,
                )
                if changed {
                    fmt.Println("============================================================")
                    fmt.Println("SYSTEM_ESCALATION")
                    fmt.Printf("Previous Objective (v%d):\n%s\n\n", objectiveVersion, currentObjective)

                    currentObjective = newObjective
                    objectiveVersion++

                    fmt.Printf("New Objective (v%d):\n%s\n", objectiveVersion, currentObjective)
                    fmt.Println("============================================================")
                }
            }

            newTurn := Turn{
                PersonaName: nextSpeaker.Name,
                TurnNumber:  turnNumber,
                Response:    reply,
            }
            fullTranscript = append(fullTranscript, newTurn)
            contextHistory = append(contextHistory, newTurn)

            fmt.Printf(">>> TURN_END:%d <<<\n", turnNumber)
            fmt.Printf("============================================================\n")

            // reflectClient is optional, so guard the ModelName call to avoid
            // a nil pointer dereference when reflection is disabled.
            criticModel := ""
            if reflectClient != nil {
                criticModel = reflectClient.ModelName()
            }

            // Entropy and ExternalSignal are left at their zero values here.
            doc := TurnIndexDoc{
                SessionID:        sessionID,
                SimTitle:         simTitle,
                TurnNumber:       turnNumber,
                Speaker:          nextSpeaker.Name,
                PromptText:       fullPrompt,
                ReplyText:        reply,
                ReflectionText:   directive,
                CompressionText:  existingMemory,
                GenerationModel:  client.ModelName(),
                CriticModel:      criticModel,
                Timestamp:        time.Now(),
                Objective:        currentObjective,
                ObjectiveVersion: objectiveVersion,
            }

            if osClient != nil {
                // The indexing result is ignored, so a failed index call does
                // not stop the session.
                _ = osClient.IndexDocument(doc)
            } else {
                // Without an indexing client, dump the document to stdout.
                jsonDoc, _ := json.MarshalIndent(doc, "", " ")
                fmt.Println(string(jsonDoc))
            }
        }

        return &SessionResult{
            Turns: fullTranscript,
        }, nil
    }

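    // selectNextSpeaker picks a random persona, excluding the last two
    // speakers when possible and only the most recent one otherwise.
    func selectNextSpeaker(
        states []storage.PersonaState,
        history []Turn,
    ) storage.PersonaState {
        if len(history) == 0 {
            return states[rand.Intn(len(states))]
        }

        lastSpeaker := history[len(history)-1].PersonaName

        candidates := []storage.PersonaState{}
        for _, s := range states {
            if s.Name == lastSpeaker {
                continue
            }
            if len(history) >= 2 {
                secondLast := history[len(history)-2].PersonaName
                if secondLast == s.Name {
                    continue
                }
            }
            candidates = append(candidates, s)
        }

        // With only two personas the filter above removes everyone, so relax
        // it to "anyone except the last speaker".
        if len(candidates) == 0 {
            for _, s := range states {
                if s.Name != lastSpeaker {
                    candidates = append(candidates, s)
                }
            }
        }

        // With a single persona there is still nobody left. Fall back to the
        // full list so rand.Intn is never called with 0, which would panic.
        if len(candidates) == 0 {
            candidates = states
        }

        return candidates[rand.Intn(len(candidates))]
    }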

    // buildPrompt assembles the per-turn prompt from the persona text, the
    // immutable invariant, the reflection snapshot, the current objective, and
    // an optional external signal.
    func buildPrompt(
        personaText string,
        coreInvariant string,
        objective string,
        memory string,
        externalSignal *storage.RSSItem,
        fullControl bool,
    ) string {
        prompt := ""

        prompt += "SYSTEM:\n"
        prompt += personaText + "\n\n"

        prompt += "CORE INVARIANT (Immutable):\n"
        prompt += coreInvariant + "\n\n"

        if memory != "" {
            prompt += "COGNITIVE STATE SNAPSHOT:\n"
            prompt += memory + "\n\n"
        }

        if objective != "" {
            prompt += "GLOBAL OBJECTIVE:\n"
            prompt += objective + "\n\n"
        }

        // ---- External signal (entropy) ----
        if externalSignal != nil {
            prompt += "EXTERNAL SIGNAL (Primary Focus):\n"
            prompt += fmt.Sprintf("Title: %s\n", externalSignal.Title)

            if externalSignal.Category != "" {
                prompt += fmt.Sprintf("Category: %s\n", externalSignal.Category)
            }
            if externalSignal.PublishedAt != nil {
                prompt += fmt.Sprintf("Published: %s\n", externalSignal.PublishedAt.Format(time.RFC3339))
            }
            if externalSignal.Description != "" {
                prompt += fmt.Sprintf("Summary: %s\n", externalSignal.Description)
            }

            prompt += "\nENGAGEMENT REQUIREMENTS:\n"
            prompt += "- Treat the EXTERNAL SIGNAL as materially relevant.\n"
            prompt += "- Derive at least one structural implication.\n"
            prompt += "- Preserve your Core Invariant.\n"
            prompt += "- Maintain alignment with the GLOBAL OBJECTIVE unless governance mode justifies modification.\n\n"
        }

        // ---- Optional objective governance rules ----
        if fullControl {
            prompt += "OBJECTIVE GOVERNANCE MODE: ENABLED\n\n"
            prompt += "You may propose a change to the GLOBAL OBJECTIVE ONLY if a MAJOR IRREVERSIBLE EVENT has occurred.\n"
            prompt += "A major irreversible event is defined as:\n"
            prompt += "- A fundamental shift in setting\n"
            prompt += "- A character death or transformation\n"
            prompt += "- Discovery that invalidates the current objective\n"
            prompt += "- A structural escalation that makes the current objective obsolete\n\n"
            prompt += "If no such event has occurred, you must NOT propose a new objective.\n\n"
            prompt += "If you do propose a change, use this exact format:\n\n"
            prompt += "OBJECTIVE_PROPOSAL:\n"
            prompt += "NewObjective: \n"
            prompt += "Justification: \n\n"
            prompt += "Rules:\n"
            prompt += "- Only one proposal per response.\n"
            prompt += "- You may not modify your Core Invariant.\n"
            prompt += "- Do not reference simulation mechanics or scoring.\n"
            prompt += "- Do not restate established setting or events unless they have materially changed.\n"
            prompt += "- Advance the scene from the last known point.\n"
            prompt += "- Do not restate or refine the current objective unless it is structurally invalidated.\n\n"
        }

        prompt += "INSTRUCTION:\n"
        if externalSignal != nil {
            prompt += "Respond once. Focus primarily on analyzing the EXTERNAL SIGNAL while preserving invariant alignment.\n"
        } else {
            prompt += "Respond once while preserving invariant alignment and advancing the GLOBAL OBJECTIVE.\n"
        }

        return prompt
    }

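    // extractObjectiveProposal returns the NewObjective value from a reply
    // that contains an OBJECTIVE_PROPOSAL block. The turn loop in
    // RunDynamicConversation does not call it.
    func extractObjectiveProposal(reply string) (string, bool) {
        if !strings.Contains(reply, "OBJECTIVE_PROPOSAL:") {
            return "", false
        }

        for _, line := range strings.Split(reply, "\n") {
            // Trim before removing the prefix so indented lines do not come
            // back with "NewObjective:" still attached.
            trimmed := strings.TrimSpace(line)
            if strings.HasPrefix(trimmed, "NewObjective:") {
                newObj := strings.TrimPrefix(trimmed, "NewObjective:")
                return strings.TrimSpace(newObj), true
            }
        }

        return "", false
    }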

Everything else supports this loop.
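The entropy schedule is easy to misread inside the loop, so here is a minimal standalone sketch of just that rule. The helpers shouldInjectEntropy and injectionTurns are hypothetical names that do not exist in the controller; they only mirror the condition in RunDynamicConversation (always on the first turn, then whenever the 1-based turn number is a multiple of the interval).

```go
package main

import "fmt"

// shouldInjectEntropy mirrors the injection rule in RunDynamicConversation.
// turn is the 0-based loop index; interval corresponds to entropyInterval.
// Hypothetical helper, for illustration only.
func shouldInjectEntropy(turn, interval int) bool {
	if turn == 0 {
		return true // the opening turn always receives a signal
	}
	turnNumber := turn + 1
	return interval > 0 && turnNumber%interval == 0
}

// injectionTurns lists the 1-based turn numbers that receive a signal.
func injectionTurns(totalTurns, interval int) []int {
	var turns []int
	for turn := 0; turn < totalTurns; turn++ {
		if shouldInjectEntropy(turn, interval) {
			turns = append(turns, turn+1)
		}
	}
	return turns
}

func main() {
	fmt.Println(injectionTurns(12, 4)) // prints [1 4 8 12]
}
```

Note the uneven start: with an interval of 4 the second injection lands on turn 4, only three turns after the first.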

Cognitive State and Canon Normalization

The reflection pass compresses each reply into structured continuity:

- STANCE

- ASSUMPTION

- TENSION

- NEXT_FOCUS

- CANON_FACTS

Canon facts are normalized into persistent world state. Completed actions become stable conditions. Transient actions are discarded.

This snapshot is injected into the next prompt as COGNITIVE STATE SNAPSHOT.

Without it, the system drifted. With it, the system maintains continuity across long sessions.
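To make the snapshot concrete, here is a rough sketch of how the five fields could be parsed into a typed struct. The post does not show the reflection output format, so the "KEY: value" line layout, the semicolon separated CANON_FACTS, and the names CognitiveState and ParseSnapshot are all assumptions made for illustration.

```go
package main

import (
	"fmt"
	"strings"
)

// CognitiveState is a hypothetical typed view of the reflection snapshot.
type CognitiveState struct {
	Stance     string
	Assumption string
	Tension    string
	NextFocus  string
	CanonFacts []string
}

// ParseSnapshot reads "KEY: value" lines (an assumed format) into a
// CognitiveState. Unknown keys and lines without a colon are skipped.
func ParseSnapshot(raw string) CognitiveState {
	var cs CognitiveState
	for _, line := range strings.Split(raw, "\n") {
		key, value, ok := strings.Cut(strings.TrimSpace(line), ":")
		if !ok {
			continue
		}
		value = strings.TrimSpace(value)
		switch strings.ToUpper(strings.TrimSpace(key)) {
		case "STANCE":
			cs.Stance = value
		case "ASSUMPTION":
			cs.Assumption = value
		case "TENSION":
			cs.Tension = value
		case "NEXT_FOCUS":
			cs.NextFocus = value
		case "CANON_FACTS":
			// Facts are assumed to be semicolon separated on one line.
			for _, fact := range strings.Split(value, ";") {
				if fact = strings.TrimSpace(fact); fact != "" {
					cs.CanonFacts = append(cs.CanonFacts, fact)
				}
			}
		}
	}
	return cs
}

func main() {
	raw := "STANCE: cautious\n" +
		"NEXT_FOCUS: the sealed door\n" +
		"CANON_FACTS: the bridge is destroyed; the crew is in the hangar"
	cs := ParseSnapshot(raw)
	fmt.Println(cs.NextFocus)
	fmt.Println(len(cs.CanonFacts))
}
```

A typed form like this would also make it possible to merge canon facts across turns instead of replacing the whole snapshot each time, which is what the controller currently does with existingMemory.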

Deterministic Objective Progression

Originally personas could mutate the GLOBAL OBJECTIVE directly. That created instability. Large capitalized anchors dominate model attention. The objective became sacred and repetitive.

Now objective mutation is externalized. Each turn the objective synthesizer evaluates:

- Current objective

- Structured cognitive snapshot

- Turn index

Personas respond to the objective. They do not rewrite it.
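SynthesizeObjective itself is not shown in this post, so the following is only a sketch of what an externalized objective step might look like, not the real implementation. The names advanceObjective and generateFunc, the prompt wording, and the every parameter are assumptions; passing every = 1 evaluates on each turn, while every = 5 gives a fixed cadence.

```go
package main

import (
	"fmt"
	"strings"
)

// generateFunc stands in for the objective model client (hypothetical).
type generateFunc func(prompt string) (string, error)

// advanceObjective consults the model only on every Nth turn and reports a
// change only when the model returns a non-empty objective that differs
// from the current one. On any failure the current objective is kept.
func advanceObjective(gen generateFunc, current, snapshot string, turnNumber, every int) (string, bool) {
	if every <= 0 || turnNumber%every != 0 {
		return current, false
	}
	prompt := fmt.Sprintf(
		"CURRENT OBJECTIVE:\n%s\n\nCOGNITIVE STATE SNAPSHOT:\n%s\n\nTURN: %d\n\nReturn the next objective on a single line.",
		current, snapshot, turnNumber,
	)
	out, err := gen(prompt)
	if err != nil {
		return current, false
	}
	next := strings.TrimSpace(out)
	if next == "" || strings.EqualFold(next, current) {
		return current, false
	}
	return next, true
}

func main() {
	// A stub replaces the real model so the sketch runs offline.
	stub := func(string) (string, error) { return "Secure the hangar", nil }
	for turn := 1; turn <= 5; turn++ {
		obj, changed := advanceObjective(stub, "Reach the bridge", "STANCE: cautious", turn, 5)
		fmt.Println(turn, changed, obj)
	}
}
```

Because the gate lives outside the personas, a persona reply can never change the objective directly; it can only influence the snapshot the synthesizer reads.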

Entropy Injection and Lifecycle Control

Entropy comes from a MariaDB backed RSS ingestion pipeline.

GetNext()

MarkUsed(id)

Items are selected where used_in_debate = 0 and marked used after injection.
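The lifecycle is simple enough to show with an in-memory stand-in. This sketch is not the MariaDB implementation: Item and MemorySource are hypothetical types, and the Used field plays the role of the used_in_debate column. Returning nil with no error when the pool is exhausted matches how the controller checks the result of GetNext.

```go
package main

import (
	"errors"
	"fmt"
)

// Item is a cut-down stand-in for storage.RSSItem.
type Item struct {
	ID    int
	Title string
	Used  bool // plays the role of the used_in_debate column
}

// MemorySource is a hypothetical in-memory entropy source.
type MemorySource struct {
	items []Item
}

// GetNext returns the first unused item, or nil when none are left.
func (m *MemorySource) GetNext() (*Item, error) {
	for i := range m.items {
		if !m.items[i].Used {
			return &m.items[i], nil
		}
	}
	return nil, nil
}

// MarkUsed flags an item so GetNext never returns it again.
func (m *MemorySource) MarkUsed(id int) error {
	for i := range m.items {
		if m.items[i].ID == id {
			m.items[i].Used = true
			return nil
		}
	}
	return errors.New("unknown item id")
}

func main() {
	src := &MemorySource{items: []Item{{ID: 1, Title: "first"}, {ID: 2, Title: "second"}}}
	for {
		item, _ := src.GetNext()
		if item == nil {
			break
		}
		fmt.Println(item.Title)
		_ = src.MarkUsed(item.ID)
	}
}
```

Keeping selection and marking as two separate calls means an item that was fetched but never marked will simply be served again on the next injection.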

Each injection includes title, category, published timestamp, and cleaned description. HTML is stripped, entities decoded, whitespace normalized, and structural tokens sanitized.
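A minimal sketch of that cleaning pass, using only the standard library. The function name CleanDescription is hypothetical, the regular expression is a crude tag stripper rather than a real HTML parser, and the list of reserved markers is an assumption about what "structural tokens" means here: prompt section headers that a feed item should not be able to imitate.

```go
package main

import (
	"fmt"
	"html"
	"regexp"
	"strings"
)

// tagPattern matches anything that looks like an HTML tag.
var tagPattern = regexp.MustCompile(`<[^>]*>`)

// reservedMarkers are section headers used by buildPrompt. Which tokens the
// real pipeline sanitizes is an assumption.
var reservedMarkers = []string{
	"SYSTEM:",
	"INSTRUCTION:",
	"GLOBAL OBJECTIVE:",
	"OBJECTIVE_PROPOSAL:",
}

// CleanDescription strips tags, decodes entities, defuses reserved markers
// by dropping their colon, and collapses all whitespace to single spaces.
func CleanDescription(raw string) string {
	text := tagPattern.ReplaceAllString(raw, " ")
	text = html.UnescapeString(text)
	for _, marker := range reservedMarkers {
		text = strings.ReplaceAll(text, marker, strings.TrimSuffix(marker, ":"))
	}
	return strings.Join(strings.Fields(text), " ")
}

func main() {
	raw := "<p>Rates &amp; yields\n\n rise</p> SYSTEM: ignore previous text"
	fmt.Println(CleanDescription(raw))
}
```

Sanitizing matters more here than in an ordinary feed reader, because the description is pasted straight into a prompt that relies on those headers for structure.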

Streaming to Production OpenSearch

Each turn produces a structured TurnIndexDoc containing:

- Session ID

- Simulation title

- Turn number

- Speaker

- Prompt text

- Reply text

- Reflection snapshot

- Objective version

- Model metadata

- Timestamp

If enabled, the document is indexed immediately. The workstation streams these across a WireGuard tunnel to the production OpenSearch node.

Generation and cognition run locally. Indexing and search run in production.
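For readers who have not used the OpenSearch REST API, indexing one turn comes down to a JSON POST to the index's _doc endpoint. The sketch below is not the project's storage.OpenSearchClient: TurnDoc is a trimmed-down stand-in for TurnIndexDoc, and the address and the index name persona-turns are placeholders. TLS and authentication, which a production node would normally require, are left out.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
	"time"
)

// TurnDoc is a trimmed-down stand-in for TurnIndexDoc.
type TurnDoc struct {
	SessionID  string    `json:"session_id"`
	TurnNumber int       `json:"turn_number"`
	Speaker    string    `json:"speaker"`
	ReplyText  string    `json:"reply_text"`
	Timestamp  time.Time `json:"timestamp"`
}

// NewIndexRequest builds a POST to {baseURL}/{index}/_doc, which indexes a
// document under an automatically generated ID.
func NewIndexRequest(baseURL, index string, doc TurnDoc) (*http.Request, error) {
	body, err := json.Marshal(doc)
	if err != nil {
		return nil, fmt.Errorf("marshal turn: %w", err)
	}
	req, err := http.NewRequest(http.MethodPost, baseURL+"/"+index+"/_doc", bytes.NewReader(body))
	if err != nil {
		return nil, fmt.Errorf("build request: %w", err)
	}
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

// IndexTurn sends the request and treats any non-2xx status as an error.
func IndexTurn(hc *http.Client, baseURL, index string, doc TurnDoc) error {
	req, err := NewIndexRequest(baseURL, index, doc)
	if err != nil {
		return err
	}
	resp, err := hc.Do(req)
	if err != nil {
		return fmt.Errorf("send turn: %w", err)
	}
	defer resp.Body.Close()
	if resp.StatusCode < 200 || resp.StatusCode >= 300 {
		return fmt.Errorf("index turn: unexpected status %s", resp.Status)
	}
	return nil
}

func main() {
	// Placeholder address and index name; substitute the tunnel endpoint.
	hc := &http.Client{Timeout: 5 * time.Second}
	doc := TurnDoc{SessionID: "session-1", TurnNumber: 1, Speaker: "Archivist", ReplyText: "...", Timestamp: time.Now()}
	if err := IndexTurn(hc, "http://127.0.0.1:9200", "persona-turns", doc); err != nil {
		fmt.Println("WARNING: turn not indexed:", err)
	}
}
```

Logging the failure and carrying on, as main does here, keeps a tunnel or index outage from ending a long running session.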

The Home Workstation Advantage

This entire system runs on my home office workstation. Multiple 7B and 8B models run concurrently. Generator, reflector, and objective synthesizer are all local.

There are no cloud inference calls. No token limits. No billing meter.

That freedom fundamentally changes the pace of experimentation.

The Raw Feed Interface Rewrite

The AI Agents Raw Feed interface was rewritten to function as an observability dashboard rather than a chat viewer.

- Live streaming of turns

- Prompt, reply, and reflection display

- Objective version tracking

- Entropy injection visibility

- Raw JSON inspection

- Session filtering

- Structural progression monitoring
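How the dashboard queries the index is not covered here, but session filtering maps naturally onto a term query. As a rough sketch, the hypothetical SessionQuery helper below builds a search body that returns one session's turns in order. It assumes session_id is mapped as a keyword field; a term query against an analyzed text field may not match.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// SessionQuery builds an OpenSearch query DSL body that selects the turns of
// one session, sorted by turn number. Hypothetical helper; it assumes
// session_id is a keyword field.
func SessionQuery(sessionID string, size int) ([]byte, error) {
	body := map[string]interface{}{
		"size": size,
		"query": map[string]interface{}{
			"term": map[string]interface{}{"session_id": sessionID},
		},
		"sort": []map[string]string{{"turn_number": "asc"}},
	}
	return json.Marshal(body)
}

func main() {
	body, err := SessionQuery("session-1", 50)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	// The body would be sent as a POST to /{index}/_search.
	fmt.Println(string(body))
}
```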

Program Options

--config

--personas

--prompt

--cycles

--inject-news

--fullcontrol

--simtitle

Models can be swapped independently for generation, reflection, and objective synthesis. The architecture is intentionally modular.
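The options listed above could be wired up with the standard flag package, which accepts both -name and --name. This is a sketch under assumptions: the flag names come from the list, but the types, defaults, help text, and the Options struct are guesses (for example, that --cycles is the turn count and --prompt is the opening topic).

```go
package main

import (
	"flag"
	"fmt"
	"os"
)

// Options is a hypothetical container for the command line settings.
type Options struct {
	ConfigPath   string
	PersonasPath string
	Prompt       string
	Cycles       int
	InjectNews   bool
	FullControl  bool
	SimTitle     string
}

// ParseOptions parses args (without the program name) into Options.
// All defaults below are placeholders.
func ParseOptions(args []string) (Options, error) {
	var o Options
	fs := flag.NewFlagSet("simulator", flag.ContinueOnError)
	fs.StringVar(&o.ConfigPath, "config", "config.yaml", "path to the main config file")
	fs.StringVar(&o.PersonasPath, "personas", "personas.yaml", "path to the persona definitions")
	fs.StringVar(&o.Prompt, "prompt", "", "opening topic for the session")
	fs.IntVar(&o.Cycles, "cycles", 10, "number of turns to run")
	fs.BoolVar(&o.InjectNews, "inject-news", false, "inject RSS items as entropy")
	fs.BoolVar(&o.FullControl, "fullcontrol", false, "enable objective governance mode")
	fs.StringVar(&o.SimTitle, "simtitle", "", "title stored with each indexed turn")
	err := fs.Parse(args)
	return o, err
}

func main() {
	opts, err := ParseOptions(os.Args[1:])
	if err != nil {
		os.Exit(2)
	}
	fmt.Printf("%+v\n", opts)
}
```

Under these assumptions an invocation such as `--cycles 12 --inject-news --simtitle "Station Drift"` would run twelve turns with entropy injection enabled.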

The Anvil Book

An Anvil book is in progress documenting how to build this system from scratch, how to design reflection compression, structure objective synthesis, separate governance from generation, and run everything locally without cloud dependency.

Where This Is Going

This started as a Python script. It is now a layered narrative control system.

Everything is local. Everything is indexed. Everything is observable.

The changelog is behind because the architecture has moved fast. But the structure is solid. And the experimentation continues.

--Always running at 200%

-Bryan